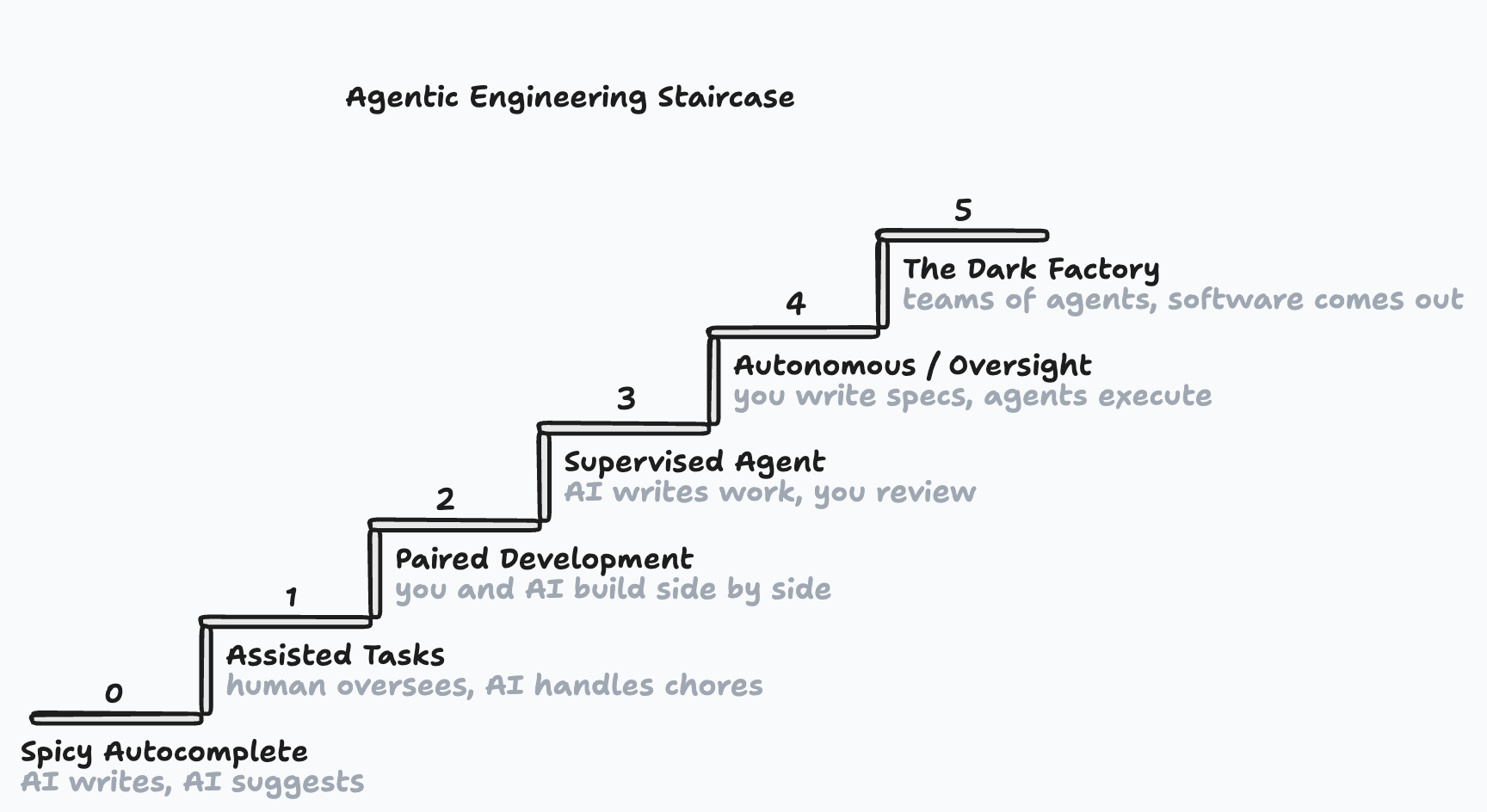

The Five Levels of Agentic Engineering: Abstraction and Process Tooling

This is all inspired by and adapted from Dan Shapiro's framework.

I've renamed the levels a bit.

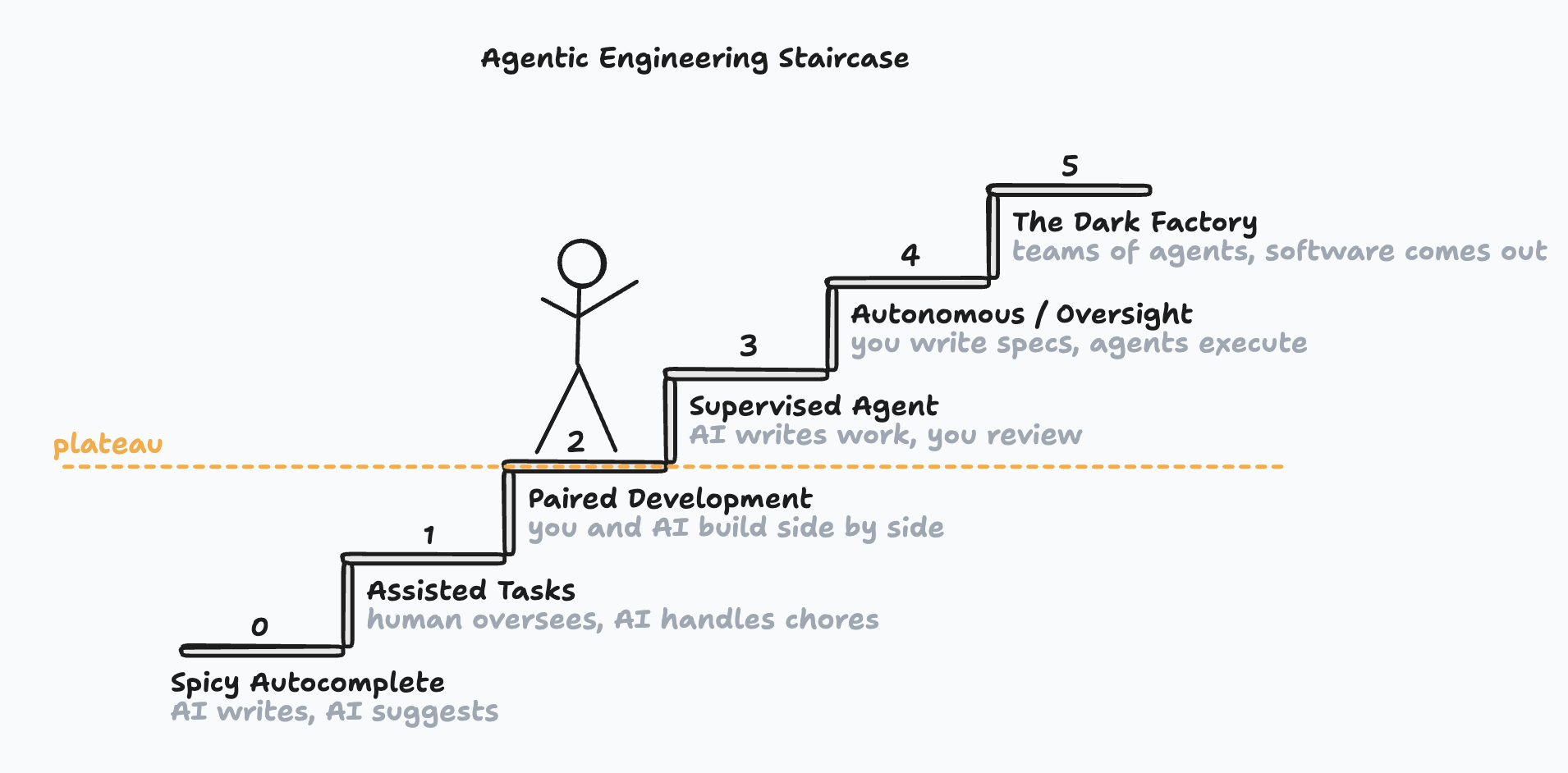

On level 2, Dan claims, "This is where 90% of 'AI-native' developers are living right now."

And I've been seeing the same in my work.

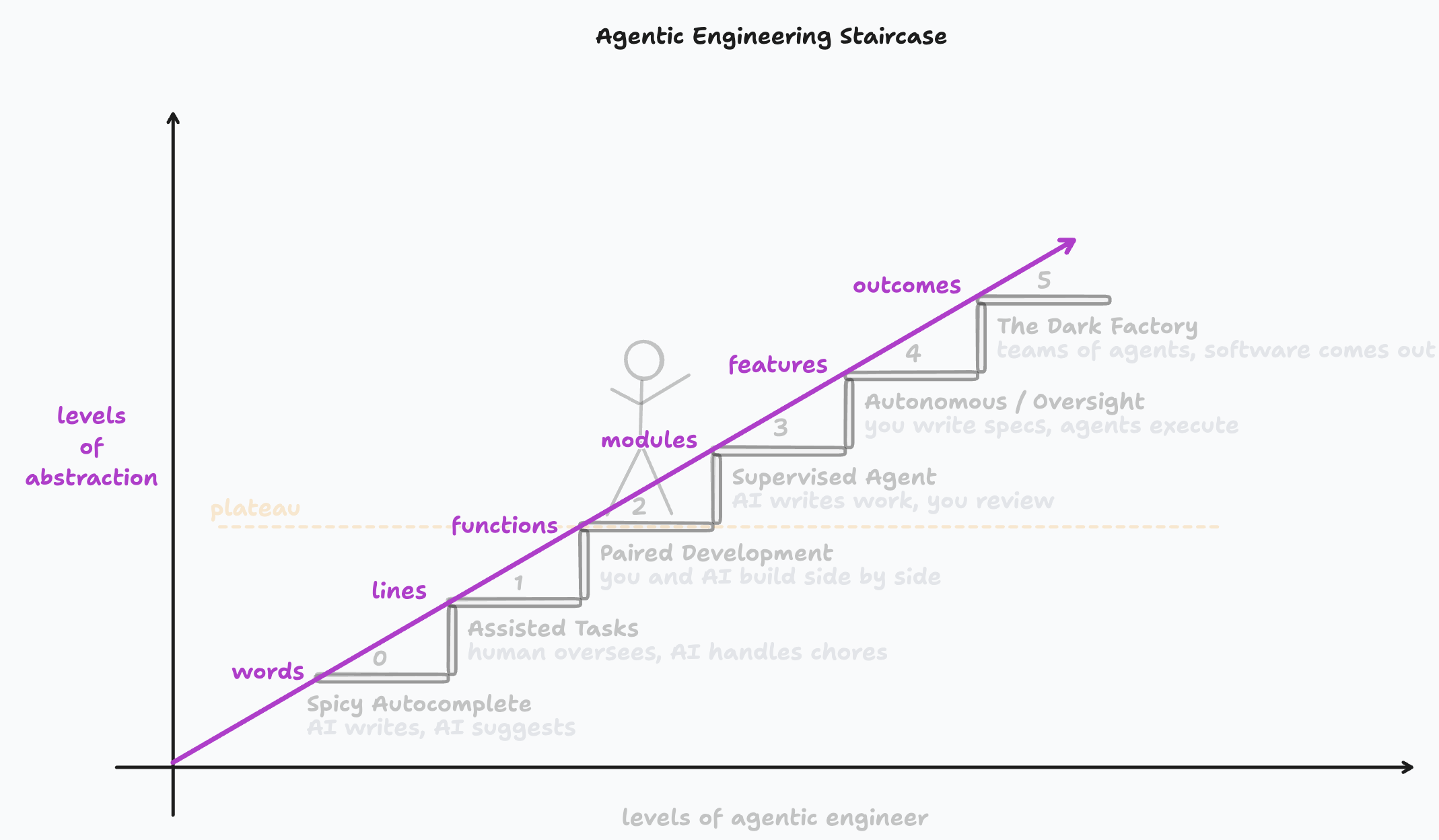

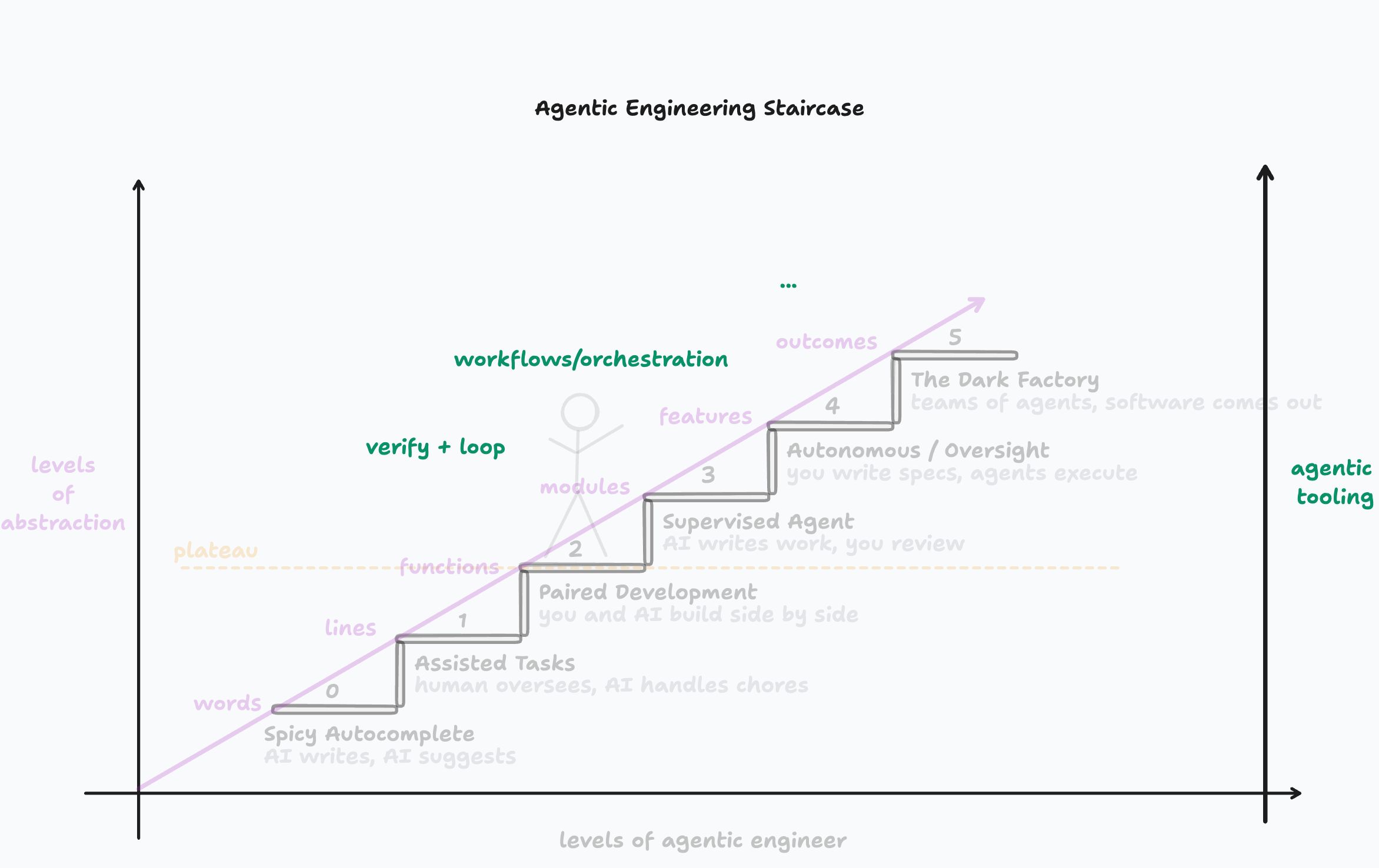

I wanna dig in here on why I think that is. It has to do with verification (control and trust). We can see why our usual ability to verify code output is threatened by looking at the abstraction we are working at on each level.

Level → abstraction level:

Spicy Autocomplete → Words: You are writing each character and word, boosted by the AI agent writing the characters and words it predicts you want!

Assisted Tasks → Lines of words: You are writing out all core logic, the AI agent writes lines of boilerplate code.

Paired Development → Functions: You are pair programming with the AI, asking for things at the function and component level. The AI is then writing function bodies, components, and unit tests, and helping with more of the core logic. Any mistake the AI makes, you catch, and you prompt it to do otherwise. When we, as engineers, are thinking about code, we are often thinking about the functions we need, the capabilities that must exist, and the object shapes/schemas. That's one reason why this level is comfortable. The other is trust: we've worked so hard on our internal ability to think through the consequences of code as we scan and read through it. We think that if we are not considering every line, we'll lose the ability to confirm that the code is maintainable, free of bugs, and will run well at scale. This is a hint. This is where we are bottlenecked. Yea, you have a coding agent running on each PR, but that's not enough. We need ways to confirm maintainability, correctness, and scalability without reviewing every line ourselves. Otherwise, our productivity will stagnate here. The next level is where we start creating tools and processes that start to break down this bottleneck...

Supervised Agent → Plans/Architecture: You are stretching and searching for how to author at a higher abstraction level. What are the artifacts here? How much of the planning ends up in a Linear issue, in PR comments, or checked into source? You wanna be authoring at the level of architecture, nailing down scalability concerns, API endpoints, and module contracts. Then you hand everything off to the AI, which you've seen write great code when it's set up to. You are solving the review bottleneck with better verification and loops. You have the AI review the code three or more times before you take a look (non-deterministic processes can converge). You tell it to explore the API it just wrote with curl and address any issues it finds until everything is fixed (verify and loop). You give it a headless Chrome instance to drive around, take screenshots, and address any issues it finds. You realize two things: (1) if you provide helpful verification tools to the agent, like the ability to exercise the API or view the frontend, then it can iterate on improvements by itself, and (2) stacking fresh-context reviews and using adversarial prompting helps it converge on the best solution (given the tools/knowledge available). You've had to change how many developers work on each project because you are moving much faster. Two-pizza team? More like a two-pizza-slice team. You are starting to wonder how to let the agent auto-merge the most straightforward changes, how to automate the prompt flows you have found across dozens/hundreds of interactions, and how best to manage planning docs, implementation plans, etc...
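Here's a rough sketch of what "verify and loop" can look like as code. Everything in it is hypothetical: `verify_and_loop`, `LoopResult`, and the fake `verify`/`fix` callables are names I made up for illustration. In real use, `verify` might wrap curl calls against the API or a headless browser run, and `fix` would hand the issues back to your coding agent. The cap on rounds is an assumption too; it's there so a stuck agent ends up back in front of a human instead of looping forever.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class LoopResult:
    passed: bool                  # True if the last verification found nothing
    fix_rounds: int               # how many times the fixer was invoked
    remaining_issues: List[str]   # whatever is still open when we stop


def verify_and_loop(
    verify: Callable[[], List[str]],
    fix: Callable[[List[str]], None],
    max_rounds: int = 5,
) -> LoopResult:
    """Verify, hand any issues to the fixer, and repeat until clean or capped."""
    issues = verify()
    rounds = 0
    while issues and rounds < max_rounds:
        fix(issues)        # hypothetical: prompt the agent with the issue list
        rounds += 1
        issues = verify()  # re-check from scratch after every fix attempt
    return LoopResult(passed=not issues, fix_rounds=rounds, remaining_issues=issues)


# Stand-ins for a real verifier and a real agent (assumptions for the demo).
open_bugs = {"GET /users returns 500", "missing pagination"}


def fake_verify() -> List[str]:
    return sorted(open_bugs)


def fake_fix(issues: List[str]) -> None:
    open_bugs.discard(issues[0])  # pretend the agent fixes one issue per round


result = verify_and_loop(fake_verify, fake_fix)
print(result)
```

The part that matters is the shape: the agent never gets to declare itself done, the verifier does. When the loop hits the cap with issues still open, that's your cue to step in.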

Autonomous/Oversight → User Stories/Features: You are now focused on two parts of the product process: (1) building the product and (2) building the system that builds the product. What are all the tools and systems that need to be in place so that the human is removed from more and more loops? You are trying to ~never author code. The goal is to review only the most important changes and to get a sight line on how to stop reviewing code altogether. You've moved almost all of your decision-making to before code generation. This is key, since the AI's ability to reason about issues requires fitting all the important parts into the first ~half of its context window. You are gaining a sense of which feature sizes are too big or too small for the whole spec-driven development process. You are regularly running agents in parallel across projects and/or within a project. You've set up a process to run agents while your laptop is closed/off. You have a clear process for how you like to handle planning docs. You have your own spec-driven development flow. You are managing features with dozens of tasks that form a dependency graph.
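To make the dependency graph idea concrete, here's a minimal sketch that groups a feature's tasks into "waves" that could each be handed to parallel agents. The task names and the `parallel_waves` helper are made up for illustration. The only real API used is `graphlib.TopologicalSorter` from the Python standard library (3.9+).

```python
from graphlib import TopologicalSorter


def parallel_waves(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves; every task in a wave has all its dependencies done."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the tasks depend on each other in a loop
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks whose dependencies are all finished
        waves.append(ready)
        sorter.done(*ready)  # pretend the whole wave completed, unlocking the next one
    return waves


# Hypothetical feature broken into tasks: each key maps to the tasks it depends on.
feature_tasks = {
    "db-schema": set(),
    "api-endpoints": {"db-schema"},
    "frontend-form": {"api-endpoints"},
    "api-docs": {"api-endpoints"},
    "e2e-tests": {"frontend-form", "api-endpoints"},
}

waves = parallel_waves(feature_tasks)
for number, wave in enumerate(waves, start=1):
    print(f"wave {number}: {wave}")
```

In this toy example only the third wave (`api-docs` and `frontend-form`) has more than one task, so that's the only spot where a second agent buys you anything. A real runner would probably mark tasks done one at a time as agents finish instead of waiting on a whole wave.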

The Dark Factory → Outcomes: You are now on the frontier, writing tools as you need them. You are uncovering, guessing at, and trying out the new foundations, new tools, and new paradigms we'll use. You are starting to notice the limitations of the tools that were built before agentic engineering: Jira, Linear, GitHub Actions, etc...

Current work across engineering is solidifying practices in levels 3 and 4 while also exploring what level 5 could be. On top of all that, things will shift with every new improvement. Also, look for agent harness features to move up through the levels over time.