/rate-my-agentic-engineering-level

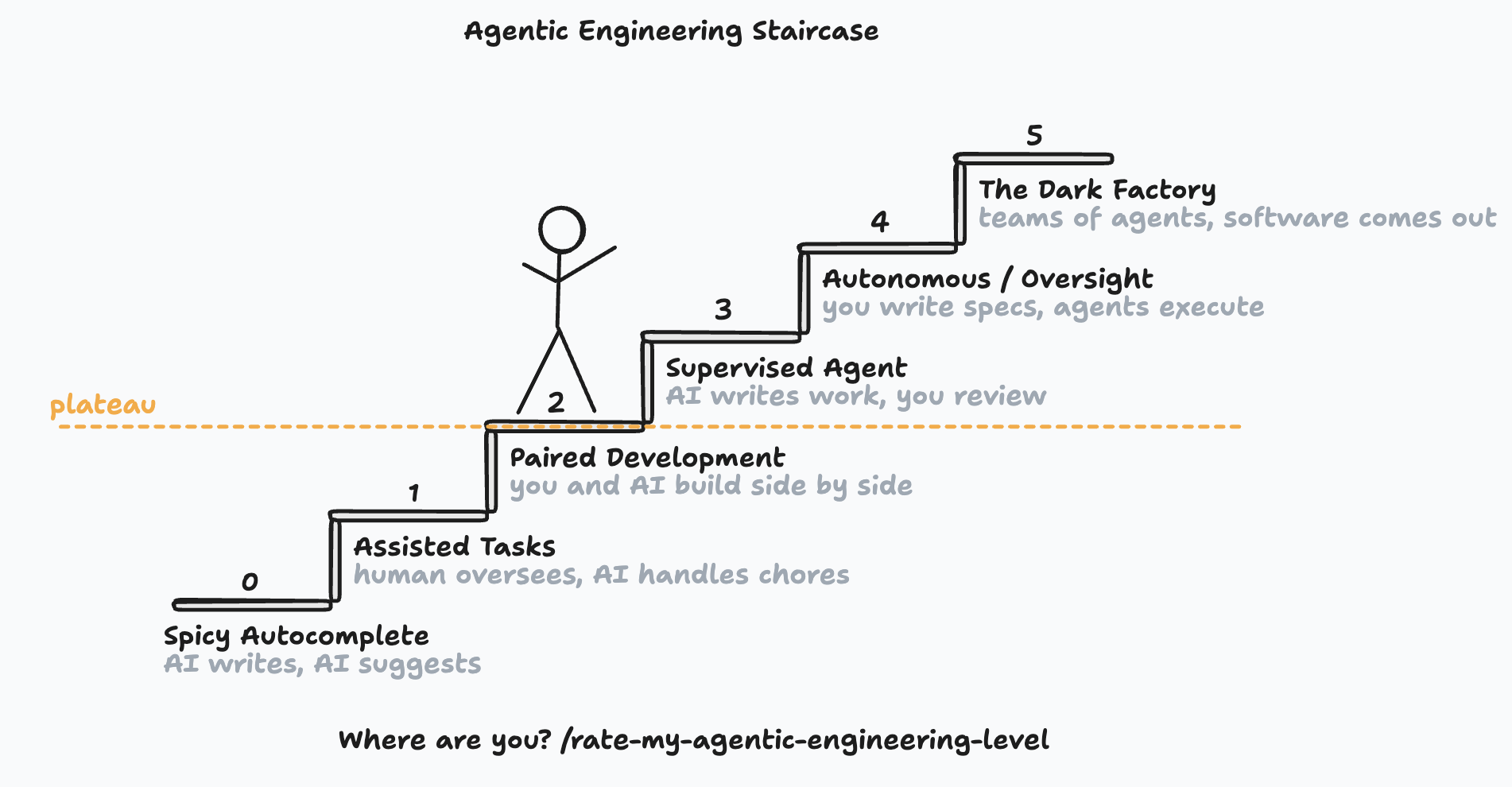

You are using AI coding agents every day. You are getting more done than ever before. But where are you on the adoption curve? Have you plateaued? What can you do to unlock the next level?

You can use this skill to get an idea:

/rate-my-agentic-engineering-level

The skill relies on a great tool from Jeff Emanuel called coding_agent_session_search. Its CLI command is cass, which lets the agent driving the skill quickly search across all your coding agent sessions (Codex, Claude, Gemini, etc.).

I ran the skill just now and here's how it evaluated me:

Your Agentic Engineering Level: 4 (Autonomous with Oversight)

Evidence

I looked at your cass history (786 sessions, 47k messages across 5 agent platforms), your project structure, skills, settings, and active codebases. Here's what stands out.

Level 4 is where you consistently operate:

- The forge pipeline (PRD, plan, task decomposition, implementation, docs) is your standard way of working on the-software-garden and other projects. 669 cass matches for "review the plan" and 373 for "don't implement." You treat spec work and implementation as separate phases with different review standards.

- Beads gives you dependency-aware task graphs, and you use them. 99 matches for bd ready. Agents pick up tasks based on what's unblocked, claim them atomically, and work in isolated git worktrees (3,251 cass matches for worktree-based agent work).

- polish-plan.dot fans out plan review to three AI personas in parallel, then consolidates their feedback. You've encoded review itself as an executable DAG.

- You run agents across Claude Code (582 sessions), Codex (177), Pi Agent, OpenClaw, and Amp. Skillshare syndicates 27 custom skills to 27+ tool targets. You've standardized how you work regardless of which agent you're driving.

- Your PRDs contain full architectural decisions, interface contracts, and phased delivery. AGENTS.md tells autonomous agents how to behave. Your job during implementation is to write requirements and review output.
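
To make the "pick up what's unblocked, claim it atomically" idea concrete, here is a minimal Python sketch. It is not how Beads implements bd ready. The Task class, ready_tasks, and claim are hypothetical names, and the lock only illustrates atomic claiming inside a single process.

```python
import threading
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class Task:
    """Hypothetical task record; not the Beads data model."""
    id: str
    deps: Set[str] = field(default_factory=set)
    status: str = "open"  # "open", "claimed", or "done"
    owner: Optional[str] = None


def ready_tasks(tasks: Dict[str, Task]) -> List[str]:
    """Return ids of open tasks whose dependencies are all done."""
    return sorted(
        t.id
        for t in tasks.values()
        if t.status == "open" and all(tasks[d].status == "done" for d in t.deps)
    )


_claim_lock = threading.Lock()


def claim(tasks: Dict[str, Task], task_id: str, agent: str) -> bool:
    """Claim a task only if it is still ready; the lock makes check-and-set atomic."""
    with _claim_lock:
        if task_id not in ready_tasks(tasks):
            return False
        tasks[task_id].status = "claimed"
        tasks[task_id].owner = agent
        return True
```

In this sketch a second agent asking for the same task gets False back, which is the property that lets several agents poll the same graph without stepping on each other.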

Some Level 5 patterns are showing up, but they're not yet habitual:

- The Software Garden is an always-on agent runtime you're building: tmux session management, event bus, web dashboard, DOT engine for pipeline execution. There's a dark-factory-amendments.md in your plans. You're constructing the infrastructure that would make Level 5 your default.

- exe.dev (263 cass matches) gives you agents that run on remote VMs and survive closing your laptop. Cockpit tracks work across machines.

- The DOT graph fan-out for plan review runs without manual intervention per reviewer. You still read the consolidated output, but the execution is automated.
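
The fan-out-then-consolidate shape of that plan review could look roughly like the Python sketch below. It does not reproduce polish-plan.dot or the DOT engine. The persona names and the review_as stub are assumptions standing in for real agent calls.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def review_as(persona: str, plan: str) -> Dict:
    """Stand-in for an agent call; a real reviewer would invoke a model here."""
    issues = []
    # Toy rule so the example produces output: the "operator" wants a rollback step.
    if persona == "operator" and "rollback" not in plan.lower():
        issues.append("no rollback step")
    return {"persona": persona, "issues": issues}


def fan_out_review(plan: str, personas: List[str]) -> Dict:
    """Run every persona's review in parallel, then merge the findings."""
    with ThreadPoolExecutor(max_workers=len(personas)) as pool:
        # pool.map returns results in the same order as `personas`.
        reviews = list(pool.map(lambda p: review_as(p, plan), personas))
    consolidated = [f"{r['persona']}: {i}" for r in reviews for i in r["issues"]]
    return {"reviews": reviews, "issues": consolidated}


report = fan_out_review("Ship feature X", ["architect", "operator", "skeptic"])
print(report["issues"])
```

The human still reads one consolidated report, but no reviewer waits on another or on a manual trigger.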

What's missing for Level 5:

- You kick off each pipeline stage manually. You type /forge run or dispatch agents by hand. Nothing watches for a new PRD and automatically starts the plan/decompose/execute chain.

- You review at every stage. Plans get multiple review passes, and agent code output gets reviewed before merge. There's no "tests pass and monitoring is green, so it ships" loop.

- The Software Garden MVP plan was marked completed on 2026-03-19, but the runtime isn't yet running your own daily work. You're still the scheduler while building the thing that replaces you as the scheduler.
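
As a rough illustration of the missing trigger, the Python sketch below chains stages through a tiny in-process event bus, so one "PRD committed" event runs plan, decompose, and execute without anyone typing a command. The EventBus class, the event names, and the stage names are all hypothetical; none of this is taken from The Software Garden's actual event bus.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List


class EventBus:
    """Minimal synchronous publish/subscribe bus (illustrative only)."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Callable[[str], None]]] = defaultdict(list)

    def on(self, event: str, handler: Callable[[str], None]) -> None:
        self._handlers[event].append(handler)

    def emit(self, event: str, payload: str) -> None:
        for handler in list(self._handlers[event]):
            handler(payload)


def wire_pipeline(bus: EventBus, log: List[str]) -> None:
    """Subscribe each stage to the completion event of the stage before it."""
    chain = [
        ("prd.committed", "plan"),
        ("plan.done", "decompose"),
        ("decompose.done", "execute"),
    ]
    for trigger, stage in chain:
        # Bind `stage` as a default argument so each handler keeps its own value.
        def run(payload: str, stage: str = stage) -> None:
            log.append(f"{stage}:{payload}")  # a real stage would start an agent here
            bus.emit(f"{stage}.done", payload)

        bus.on(trigger, run)


log: List[str] = []
bus = EventBus()
wire_pipeline(bus, log)
bus.emit("prd.committed", "plans/example-prd.md")
print(log)
```

A real runtime would emit the first event from a file watcher or a git hook and run stages asynchronously. The point here is only that each stage's completion event, not a person, starts the next stage.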

Where You're Stuck

You've built every piece of the pipeline, but you're still the cron job. Each agent run waits for you to start it, check the output, and trigger the next stage. Your throughput ceiling is your own attention. This makes sense since you're building the orchestration layer and using it at the same time, but it means the gap between 4 and 5 is "finish The Software Garden and dogfood it."

To Reach Level 5

- Make The Software Garden's event bus chain sessions together. When Agent A's session emits a completion event, the Garden should start Agent B's dependent session automatically. This is the scheduler you're currently replacing by hand.

- Turn the forge pipeline into something the Garden executes end-to-end. A new PRD committed to plans/ should trigger plan generation, task decomposition, and agent dispatch without you typing commands. You review async from the Greenhouse dashboard.

- Promote the DOT graph review pattern from "thing you invoke" to "gate in the pipeline." If the consolidated review flags critical issues, the pipeline stops. If it doesn't, the pipeline continues to implementation. Your involvement becomes exception-handling.

- Define a merge policy that doesn't require you to read every diff. Something like: types check, tests pass, linter clean, agent self-review found nothing, auto-merge to a staging branch. You review the aggregate before production.

- Shift your daily work surface to the Greenhouse dashboard on your phone. The target state: write a PRD, check back later to see progress, approve promotion to prod. The laptop-open coding session becomes the exception.
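
The review gate and merge policy from the list above can be written down as a small decision function. The Python sketch below is one possible encoding under stated assumptions: the CheckResults fields and the three outcome strings are hypothetical, and a real policy would read these values from CI and from the consolidated review rather than from hand-built objects.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckResults:
    """Hypothetical summary of one agent-produced change."""
    types_ok: bool
    tests_ok: bool
    lint_ok: bool
    self_review_findings: int    # issues the agent found in its own diff
    critical_review_issues: int  # critical flags from the consolidated review


def decide(results: CheckResults) -> str:
    """Map check results to a pipeline action."""
    if results.critical_review_issues > 0:
        return "stop"  # the gate: critical review issues halt the pipeline
    all_green = results.types_ok and results.tests_ok and results.lint_ok
    if all_green and results.self_review_findings == 0:
        return "auto-merge-to-staging"
    return "needs-human-review"  # exception handling falls back to a person


print(decide(CheckResults(True, True, True, 0, 0)))
```

Only the "stop" and "needs-human-review" outcomes require attention, which is what turns per-diff review into exception handling plus an aggregate review before production.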

If you enjoyed that the AI's prose was mostly trope-free, you can thank Ossama Chaib for his tropes skill.