Don't Write Code. Don't Review Code. And Make it Good.

I was recently telling a friend that one of my 2026 goals, as a software engineer, is to get far enough with agentic engineering that:

- I don't write code

- I don't review code

- And I still build helpful products with good code

This would have shocked February 2025 me.

How'd we get here?

Last spring, I was using LLMs in Cursor to write unit tests, autocomplete boilerplate, and get up to speed on new codebases.

LLMs wrote poor code. They made poor abstractions, wrote less maintainable code, and didn't reuse the good tools, libraries, and patterns already in the codebase.

GPT Pro was helpful if you loaded your whole codebase into its context window by dragging and dropping huge .txt files into the web or desktop UI.

Then two things happened:

1. We put LLMs in a loop with feedback

Depending on how closely you were following X, this hit you in spring or summer 2025. This is any coding agent you’ve tried: Claude Code, Codex, Pi, etc.

2. We passed some invisible quality threshold

One day in late November 2025, you were deciding what pie to make for Thanksgiving, and the next, you were so hyped up on Opus 4.5 that you forgot to eat breakfast and lunch.

1. Loop with Feedback

Before - Let's wreck your flow

- Human asks LLM to write some code.

- LLM writes some code.

- Human runs tests. Tests fail.

- Human copy-pastes error back to LLM and asks for fix.

- LLM attempts code fix.

- Human runs tests. Tests pass.

- Human does manual QA on web.

- Human asks LLM to make PR.

- LLM makes PR.

- Human adds screenshots and scans PR.

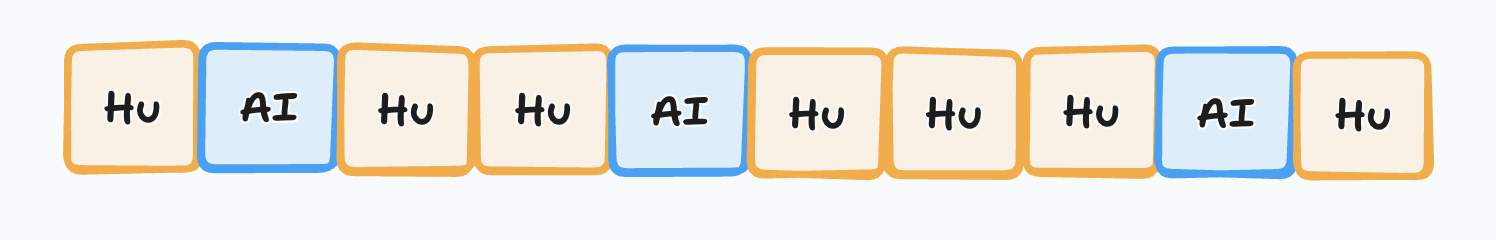

You have to intervene all the time. Let's map this visually; it's more striking:

Hu = Human task/step

AI = LLM task/step

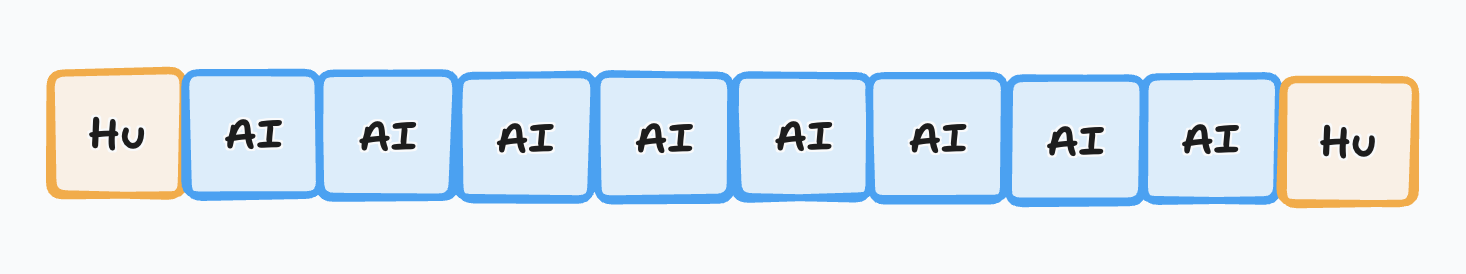

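What we'd like to see is the AI handling the long middle stretch on its own, with the human stepping in only at the start and the end.

And that's exactly what loops with feedback give us.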

After - Let's honor your time and your flow

- Human asks LLM to write some code.

- LLM writes some code.

- LLM runs tests. Tests fail.

- LLM attempts code fix.

- LLM runs tests. Tests pass.

- LLM spins up a dev server.

- LLM takes a screenshot of its work.

- LLM submits PR (with commits and body in your preferred style).

- Human does smoke test and scans PR.
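Stripped to its core, the After list is a loop in which test output flows straight back into the next model call instead of passing through a human's clipboard. Here is a minimal sketch of that idea. It is not how Claude Code or Codex are actually built: `write_code` and `run_tests` are hypothetical stand-ins for a model call and a test runner, and the toy versions at the bottom exist only so the sketch runs.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class CheckResult:
    passed: bool
    output: str  # failure text that gets fed back to the model


def feedback_loop(
    task: str,
    write_code: Callable[[str, Optional[str]], str],  # hypothetical model call
    run_tests: Callable[[str], CheckResult],          # hypothetical test runner
    max_attempts: int = 5,
) -> Tuple[str, int]:
    """Ask for code, run the tests, and feed failures back until they pass."""
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        code = write_code(task, feedback)
        result = run_tests(code)
        if result.passed:
            return code, attempt
        feedback = result.output  # the step a human used to copy-paste by hand
    raise RuntimeError(f"tests still failing after {max_attempts} attempts")


# Toy stand-ins so the sketch runs: the "model" gets it wrong until it sees feedback.
def toy_model(task: str, feedback: Optional[str]) -> str:
    if feedback is None:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


def toy_tests(code: str) -> CheckResult:
    namespace: dict = {}
    exec(code, namespace)  # fine for a toy; don't exec untrusted model output like this
    ok = namespace["add"](2, 3) == 5
    return CheckResult(ok, "" if ok else "expected add(2, 3) == 5")


code, attempts = feedback_loop("implement add(a, b)", toy_model, toy_tests)
```

With the toy stand-ins, `attempts` ends up as 2: the first draft fails, the failure message goes back to the model, and the second draft passes. The `max_attempts` cap matters in practice. Without it, a model that never gets the tests green would loop forever.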

And that's only the beginning!

You should have another LLM review that PR before it's even worth your time to scan it.

Rinse and repeat, keeping quality up while removing yourself from the loop.

Engineering by any other name would still smell as sweet.

This should all feel familiar. We were trained to look aggressively for patterns, processes, and procedures we could abstract and automate.

The new part is what we can automate.

Human out of the loop

Call it feedback engineering or harness engineering, or just call on Ralph.

Like a multidimensional Roomba, the LLM will bounce off the feedback and guardrails until it lands in the solution space you wanted.

If it converges on something you don't like, don't edit the result (the code). That's like copy-pasting CSS styles instead of writing a class, or copy-pasting a function body instead of abstracting out a utility. It doesn't scale.

So what do you do? You refactor the system around the LLM so it's impossible for the next run to converge on that spot again.

Sometimes you constrain the solution space with tests and tools. Sometimes you constrain it with architecture, library choices, and other project decisions.
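As one concrete example of constraining the solution space with a test, suppose (hypothetically) the agent keeps importing `requests` directly when your project wants all HTTP to go through its own wrapper. Instead of fixing each diff by hand, you add a check that fails whenever that pattern appears, with a message telling the next run what to do instead. The sketch below uses Python's standard `ast` module. The banned module, the `http_client` wrapper name, and the `src` directory are illustrative assumptions, not a recommendation for any particular project.

```python
import ast
from pathlib import Path
from typing import Dict, List, Set

# Illustrative assumption: the project routes all HTTP through its own `http_client`
# wrapper, so direct imports of `requests` are off-limits.
BANNED_MODULES: Set[str] = {"requests"}
HINT = "Import the project's http_client wrapper instead of requests."


def banned_import_lines(source: str, banned: Set[str] = BANNED_MODULES) -> List[int]:
    """Return line numbers of `import x` / `from x import y` statements that hit a banned module."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            # Relative imports (`from . import x`) refer to the project itself, so skip them.
            modules = [node.module] if node.level == 0 and node.module else []
        else:
            continue
        if any(m.split(".")[0] in banned for m in modules):
            hits.append(node.lineno)
    return sorted(hits)


def find_violations(root: str) -> Dict[str, List[int]]:
    """Scan every .py file under `root` and map offending paths to their line numbers."""
    violations = {}
    for path in Path(root).rglob("*.py"):
        lines = banned_import_lines(path.read_text())
        if lines:
            violations[str(path)] = lines
    return violations


def test_no_direct_requests_imports():
    # Run with pytest; "src" is a placeholder for wherever your code lives.
    violations = find_violations("src")
    assert not violations, f"{HINT} Offending imports: {violations}"
```

The assertion message is the important design choice. The agent reads test failures, so a failure that names the fix steers the next run toward it instead of merely rejecting the current one.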

How best to do this is still being figured out! Come explore with us.

2. The Invisible Threshold

This is hard to convey in words or numbers alone (benchmarks didn't seem to catch it), since it came from Fingerspitzengefühl: trusting your personal experience (aka vibes).

But last November with Opus 4.5, and later in December with gpt-5.2-codex, the instruction adherence and quality of the coding agents' work jumped past some invisible threshold, and it started to feel like you could soar: fewer tangents, fewer misunderstandings, smarter tool use, less cheating on tests, etc.

Some great engineers at OpenAI yesterday told me that their job has fundamentally changed since December. Prior to then, they could use Codex for unit tests; now it writes essentially all the code - Greg Brockman, President & Co-Founder OpenAI

Spotify says its best developers haven’t written a line of code since December, thanks to AI - TechCrunch

Where are we going?

This post isn't enough to hand to an engineer and say "you should do this too." It's all pretty hand-wavy, and this post alone wouldn't convince me to change all my engineering habits. I'll spend some time on that in future posts.

For now, look at what other engineers are saying:

For me personally it has been 100% for two+ months now, I don’t even make small edits by hand. - Boris Cherny, Creator of Claude Code

Just last summer, I spoke with Lex Fridman about not letting AI write any code directly, but it turns out part of this resistance was simply based on the models not being good enough at the time! I spent more time rewriting what it wrote, than if I’d done it from scratch. That has now flipped. - DHH, Creator of Ruby on Rails

I’ve got reports rolling in of companies concluding that not only should they not be writing code by hand, they should not be reviewing it manually either. - Steve Yegge, ex-Amazon, ex-Google, ex-Grab, etc

I Stopped Writing Code (And Started Getting More Done) - Giovanny Trematerra, Staff Engineer at Spotify

You get the idea.